Reference architectures (FREE SELF)

You can set up GitLab on a single server or scale it up to serve many users. This page details the recommended Reference Architectures that were built and verified by the GitLab Quality and Support teams.

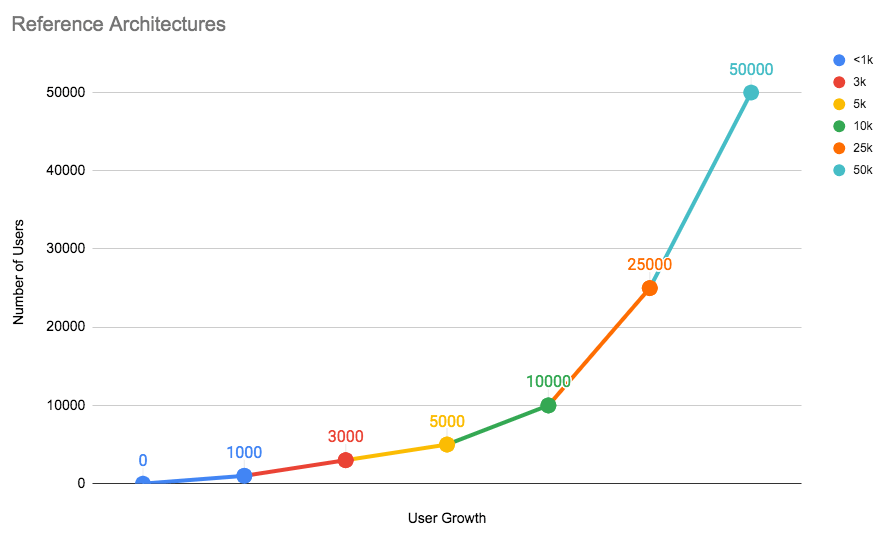

The chart above shows each architecture tier and the number of users it can handle. As your number of users grows over time, scale GitLab accordingly.

Testing on these reference architectures was performed with the GitLab Performance Tool at specific coded workloads, and the throughputs used for testing were calculated based on sample customer data. Select the reference architecture that matches your scale.

Each endpoint type is tested with the following number of requests per second (RPS) per 1,000 users:

- API: 20 RPS

- Web: 2 RPS

- Git (Pull): 2 RPS

- Git (Push): 0.4 RPS (rounded to nearest integer)
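As a rough illustration of how these per-1,000-user rates translate into targets for a given user count, the following sketch scales each rate linearly and rounds to the nearest integer. The function name `target_rps` and the dictionary keys are hypothetical and chosen only for this example. Linear scaling is an assumption drawn from the "per 1,000 users" wording, not a description of how the GitLab Performance Tool is implemented.

```python
# Test rates from the list above: requests per second per 1,000 users.
RPS_PER_1000_USERS = {
    "api": 20,
    "web": 2,
    "git_pull": 2,
    "git_push": 0.4,
}


def target_rps(users: int) -> dict[str, int]:
    """Scale each rate linearly with the user count, rounded to the nearest integer."""
    return {
        endpoint: round(rate * users / 1000)
        for endpoint, rate in RPS_PER_1000_USERS.items()
    }


if __name__ == "__main__":
    # Targets for a 10,000 user architecture.
    print(target_rps(10_000))
```

For 10,000 users this gives 200 RPS for API, 20 RPS each for Web and Git (Pull), and 4 RPS for Git (Push).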

For GitLab instances with fewer than 2,000 users, it's recommended that you use the default setup by installing GitLab on a single machine to minimize maintenance and resource costs.

If your organization has more than 2,000 users, the recommendation is to scale the GitLab components to multiple machine nodes. The machine nodes are grouped by components. The addition of these nodes increases the performance and scalability of your GitLab instance.

When scaling GitLab, there are several factors to consider:

- Multiple application nodes to handle frontend traffic.

- A load balancer is added in front to distribute traffic across the application nodes.

- The application nodes connect to a shared file server and PostgreSQL and Redis services on the backend.

NOTE: Depending on your workflow, the following recommended reference architectures may need to be adapted accordingly. Your workload is influenced by factors including how active your users are, how much automation you use, mirroring, and repository/change size. Additionally, the displayed memory values are provided by GCP machine types. For different cloud vendors, attempt to select options that best match the provided architecture.

Available reference architectures

The following reference architectures are available:

- Up to 1,000 users

- Up to 2,000 users

- Up to 3,000 users

- Up to 5,000 users

- Up to 10,000 users

- Up to 25,000 users

- Up to 50,000 users
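A minimal sketch of choosing a tier from this list by user count alone: it returns the smallest listed tier whose upper bound covers the given number of users. The names `TIERS` and `pick_tier` are hypothetical. The sketch ignores the workload factors mentioned in the note above (user activity, automation, mirroring, repository size), which may call for a larger architecture than the user count suggests.

```python
from bisect import bisect_left

# Upper bounds (inclusive) of the reference architectures listed above.
TIERS = [1_000, 2_000, 3_000, 5_000, 10_000, 25_000, 50_000]


def pick_tier(users: int) -> int:
    """Return the smallest listed tier whose upper bound covers `users`."""
    if users < 1:
        raise ValueError("users must be a positive integer")
    index = bisect_left(TIERS, users)
    if index == len(TIERS):
        raise ValueError(f"{users} users exceeds the largest listed tier")
    return TIERS[index]


if __name__ == "__main__":
    for count in (800, 2_000, 2_001, 12_000):
        print(count, "->", pick_tier(count))
```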

The following Cloud Native Hybrid reference architectures, where select recommended components can be run in Kubernetes, are available:

- Up to 2,000 users

- Up to 3,000 users

- Up to 5,000 users

- Up to 10,000 users

- Up to 25,000 users

- Up to 50,000 users

A GitLab Premium or Ultimate license is required to get assistance from Support with troubleshooting the 2,000 users and higher reference architectures. Read more about our definition of scaled architectures.

Availability Components

GitLab comes with the following components for your use, listed from least to most complex:

- Automated backups

- Traffic load balancer

- Zero downtime updates

- Automated database failover

- Instance level replication with GitLab Geo

As you implement these components, begin with a single server and then do backups. Only after completing the first server should you proceed to the next.

Also, not implementing extra servers for GitLab doesn't necessarily mean that you'll have more downtime. Depending on your needs and experience level, single servers can have more actual perceived uptime for your users.

Automated backups

- Level of complexity: Low

- Required domain knowledge: PostgreSQL, GitLab configurations, Git

This solution is appropriate for many teams that have the default GitLab installation. With automatic backups of the GitLab repositories, configuration, and the database, this can be an optimal solution if you don't have strict requirements. Automated backups are the least complex component to set up. They provide point-in-time recovery on a predetermined schedule.
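To make the recovery behavior concrete, here is a small sketch of what scheduled backups imply: you can only restore to the moments when a backup was taken, so the recovery point for any incident is the most recent backup at or before it. The function `latest_backup_before` and the nightly schedule in the usage example are hypothetical and not part of GitLab.

```python
from bisect import bisect_right
from datetime import datetime
from typing import Optional


def latest_backup_before(backups: list[datetime], target: datetime) -> Optional[datetime]:
    """Return the most recent backup taken at or before `target`, or None if there is none."""
    ordered = sorted(backups)
    index = bisect_right(ordered, target)
    return ordered[index - 1] if index else None


if __name__ == "__main__":
    # Hypothetical nightly backups at 02:00.
    nightly = [datetime(2024, 1, day, 2, 0) for day in (1, 2, 3)]
    incident = datetime(2024, 1, 2, 15, 30)
    print(latest_backup_before(nightly, incident))
```

With this schedule, an incident on the afternoon of January 2 can be recovered only to the 02:00 backup of that day, so changes made in between are lost.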

Traffic load balancer (PREMIUM SELF)

- Level of complexity: Medium

- Required domain knowledge: HAProxy, shared storage, distributed systems

This requires separating out GitLab into multiple application nodes with an added load balancer. The load balancer will distribute traffic across GitLab application nodes. Meanwhile, each application node connects to a shared file server and database systems on the back end. This way, if one of the application servers fails, the workflow is not interrupted. HAProxy is recommended as the load balancer.

With this added component you have a number of advantages compared to the default installation:

- Increase the number of users.

- Enable zero-downtime upgrades.

- Increase availability.

For more details on how to configure a traffic load balancer with GitLab, you can refer to any of the available reference architectures with more than 1,000 users.
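The idea behind the load balancer can be sketched as a toy round-robin selector that skips application nodes marked as down, so requests keep flowing when one node fails. This is an illustration only: the class `RoundRobinBalancer` and the node names are hypothetical, and it does not reflect how HAProxy is implemented or configured.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy round-robin balancer that skips nodes marked unhealthy."""

    def __init__(self, nodes: list[str]) -> None:
        if not nodes:
            raise ValueError("at least one node is required")
        self._nodes = list(nodes)
        self._healthy = set(nodes)
        self._ring = cycle(self._nodes)

    def mark_down(self, node: str) -> None:
        self._healthy.discard(node)

    def mark_up(self, node: str) -> None:
        if node in self._nodes:
            self._healthy.add(node)

    def next_node(self) -> str:
        # Visit each node at most once per call so the loop always terminates.
        for _ in range(len(self._nodes)):
            node = next(self._ring)
            if node in self._healthy:
                return node
        raise RuntimeError("no healthy application nodes available")


if __name__ == "__main__":
    balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
    balancer.mark_down("app-2")
    print([balancer.next_node() for _ in range(4)])
```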

Zero downtime updates (PREMIUM SELF)

- Level of complexity: Medium

- Required domain knowledge: PostgreSQL, HAProxy, shared storage, distributed systems

GitLab supports zero-downtime upgrades. Single GitLab nodes can be updated with only a few minutes of downtime. To avoid this, we recommend separating GitLab into several application nodes. As long as at least one of each component is online and capable of handling the instance's usage load, your team's productivity will not be interrupted during the update.
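The "at least one of each component online" condition can be expressed as a simple pre-check before taking a node offline for an update. The sketch below is an assumption-laden illustration: the function `safe_to_take_offline`, the node names, and the component labels are hypothetical, and it checks only that each component type keeps one online node, not whether the remaining nodes can handle the usage load.

```python
from collections import Counter


def safe_to_take_offline(nodes: dict[str, str], online: set[str], candidate: str) -> bool:
    """Return True if every component type still has an online node without `candidate`.

    `nodes` maps a node name to its component type.
    """
    remaining = Counter(nodes[name] for name in online if name != candidate)
    return all(remaining[component] > 0 for component in set(nodes.values()))


if __name__ == "__main__":
    nodes = {"app-1": "application", "app-2": "application", "db-1": "database"}
    online = set(nodes)
    print(safe_to_take_offline(nodes, online, "app-1"))  # a second application node remains
    print(safe_to_take_offline(nodes, online, "db-1"))   # the only database node
```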

Automated database failover (PREMIUM SELF)

- Level of complexity: High

- Required domain knowledge: PgBouncer, Patroni, shared storage, distributed systems

By adding automatic failover for database systems, you can enable higher uptime with additional database nodes. This extends the default database with cluster management and failover policies. PgBouncer in conjunction with Patroni is recommended.

Instance level replication with GitLab Geo (PREMIUM SELF)

- Level of complexity: Very High

- Required domain knowledge: Storage replication

GitLab Geo allows you to replicate your GitLab instance to other geographical locations as a read-only fully operational instance that can also be promoted in case of disaster.

Deviating from the suggested reference architectures

As a general guideline, the further away you move from the Reference Architectures, the harder it will be to get support for it. With any deviation, you're introducing a layer of complexity that will add challenges to finding out where potential issues might lie.

The reference architectures use the official GitLab Linux packages (Omnibus GitLab) or Helm Charts to install and configure the various components. The components are installed on separate machines (virtualized or bare metal), with machine hardware requirements listed in the "Configuration" column and equivalent VM standard sizes listed in GCP/AWS/Azure columns of each available reference architecture.

Running components on Docker (including Compose) with the same specs should be fine, as Docker is well known in terms of support.

However, it is still an additional layer and may still add some support complexities, such as not being able to run strace easily in containers.

Other technologies, like Docker Swarm, are not officially supported, but can be implemented at your own risk. In that case, GitLab Support will not be able to help you.

Supported modifications for lower user count HA reference architectures

The reference architectures for user counts 3,000 and up support High Availability (HA).

If you need HA but have a lower user count, select modifications to the 3,000 user architecture are supported.

For more details, refer to this section in the architecture's documentation.

Testing process and results

The Quality Engineering - Enablement team does regular smoke and performance tests for the reference architectures to ensure they remain compliant.

In this section, we detail some of the process as well as the results.

Note the following about the testing process:

- Testing occurs against all main reference architectures and cloud providers in an automated and ad-hoc fashion. This is achieved through two tools built by the team:

  - The GitLab Environment Toolkit for building the environments.

  - The GitLab Performance Tool for performance testing.

- Network latency on the test environments between components on all Cloud Providers was measured at <5ms. Note that this is shared as an observation and not as an implicit recommendation.

- We aim to have a "test smart" approach where architectures tested have a good range that can also apply to others. Testing focuses on 10k Omnibus on GCP as the testing has shown this is a good bellwether for the other architectures and cloud providers as well as Cloud Native Hybrids.

- Testing is done publicly and all results are shared.

The following table details the testing done against the reference architectures along with the frequency and results. Note that this list is non-exhaustive. Additional testing is continuously evaluated and iterated on, and the table is updated accordingly.

| Reference Architecture Size | Bare-Metal | GCP | AWS | Azure |
|---|---|---|---|---|
| 1k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | - | - |
| 2k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | - | - |
| 3k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | - | - |
| 5k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | - | - |
| 10k | Refer to GCP<sup>1</sup> | Standard - Daily<sup>1</sup><br/>Standard (inc Cloud Services) - Ad-Hoc<br/>Cloud Native Hybrid - Ad-Hoc | Standard (inc Cloud Services) - Ad-Hoc<br/>Cloud Native Hybrid - Ad-Hoc | Standard - Ad-Hoc |
| 25k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | - | Standard - Ad-Hoc |
| 50k | Refer to GCP<sup>1</sup> | Standard - Weekly<sup>1</sup> | Standard (inc Cloud Services) - Ad-Hoc | - |

1. The Standard Reference Architectures are designed to be platform agnostic, with everything being run on VMs via Omnibus GitLab. While testing occurs primarily on GCP, ad-hoc testing has shown that they perform similarly on equivalently specced hardware on other Cloud Providers or if run on premises (bare-metal).